'Overstretched' valuations, and Anthropic growing 50% in a few weeks... you ain't seen nothing yet

Part 1 of the AI 2030 series: Modelling out Anthropic's revenue growth to try and size the 2030 AI economy

Anthropic’s annualised run-rate went from $30 billion in April to $45 billion in early May. Roughly $15 billion of net ARR added in three weeks.

The market has noticed. Sceptics are everywhere now, with many calling tops and warning that AI infrastructure is wildly overstretched, that the bubble is forming, that the maths doesn’t work.

I engaged with the bubble question directly back in November, and have so far been proven to be somewhat on the money.

The framework I used then was simple: own the bottleneck.

Five months on, and after huge gains in AI infrastructure stocks, Anthropic’s $15B of net ARR added in three weeks tells me the bottleneck is not going anywhere.

In fact, the last six months have been crazy, yet I honestly think you ain’t seen nothing yet.

I think Anthropic specifically has a credible path to $1 trillion ARR by 2034, with $600 billion as my base case for 2030.

Two reasons:

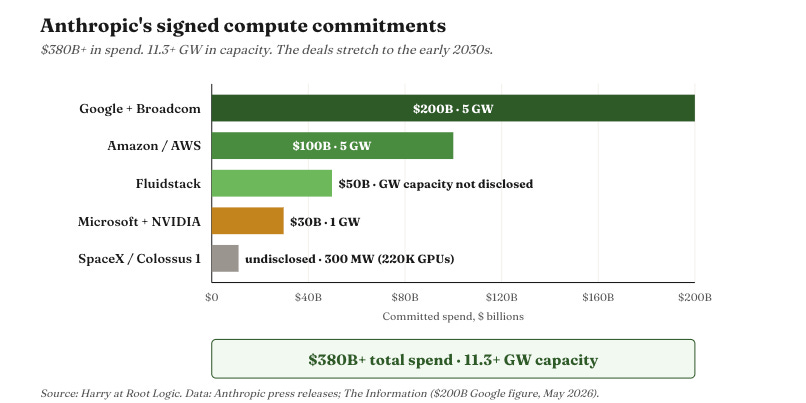

First, the compute commitments. Anthropic has signed more than $380 billion in compute spend and over 11 gigawatts of capacity. Amazon. Google. Microsoft. NVIDIA. Fluidstack. SpaceX. The deals stretch to the early 2030s. To put 11 GW in context: that’s roughly the entire installed electricity generation capacity of Ireland. Five times the United Kingdom’s total data centre footprint today, and nearly twice what the UK government has set as its most ambitious 2030 target.

Second, the maths. To make commitments of this scale economic at reasonable inference margins, Anthropic needs annual revenue somewhere north of $300 billion by the end of the decade. The contracts force the trajectory. There is no scenario in which Anthropic signs these deals and the revenue doesn’t materially follow - short of bankruptcy, which Amazon, Google, and the rest of the consortium would have to be wildly miscalibrated to allow.

So that’s where we are. $45 billion ARR today. A revenue requirement north of $300 billion by 2030. A stated ambition of $1 trillion by 2034.

This isn’t a moonshot call. It’s a data-driven, evidence-based, logical conclusion.

And it is the calibrated framework I anchor every AI infrastructure valuation on.

Power. Compute. Memory. Networking. Robotics. Every name in the trade - Nebius, IREN, Micron, the hyperscalers - only makes sense at current multiples if the frontier AI labs hit certain revenue thresholds. In my opinion, Anthropic specifically clears those thresholds. By a lot.

That’s what I’m going to spend the rest of the article on. Let me show you the argument, and you can tell me how crazy I am at the end.

Here’s what I’ll cover:

Why AGI by 2029 is the assumption everything else depends on

The maths the $380 billion of contracts force on Anthropic

How four revenue lines build to $600B by 2030, and $1T not long after

The bears I take seriously

What this means for the rest of the AI infrastructure trade

The step change: why AGI by 2029 is what makes these numbers rational

I think AGI by 2029 is a sensible take.

Everything I’m about to argue rests on that - so let me explain why I think it, before I ask you to follow me into the maths.

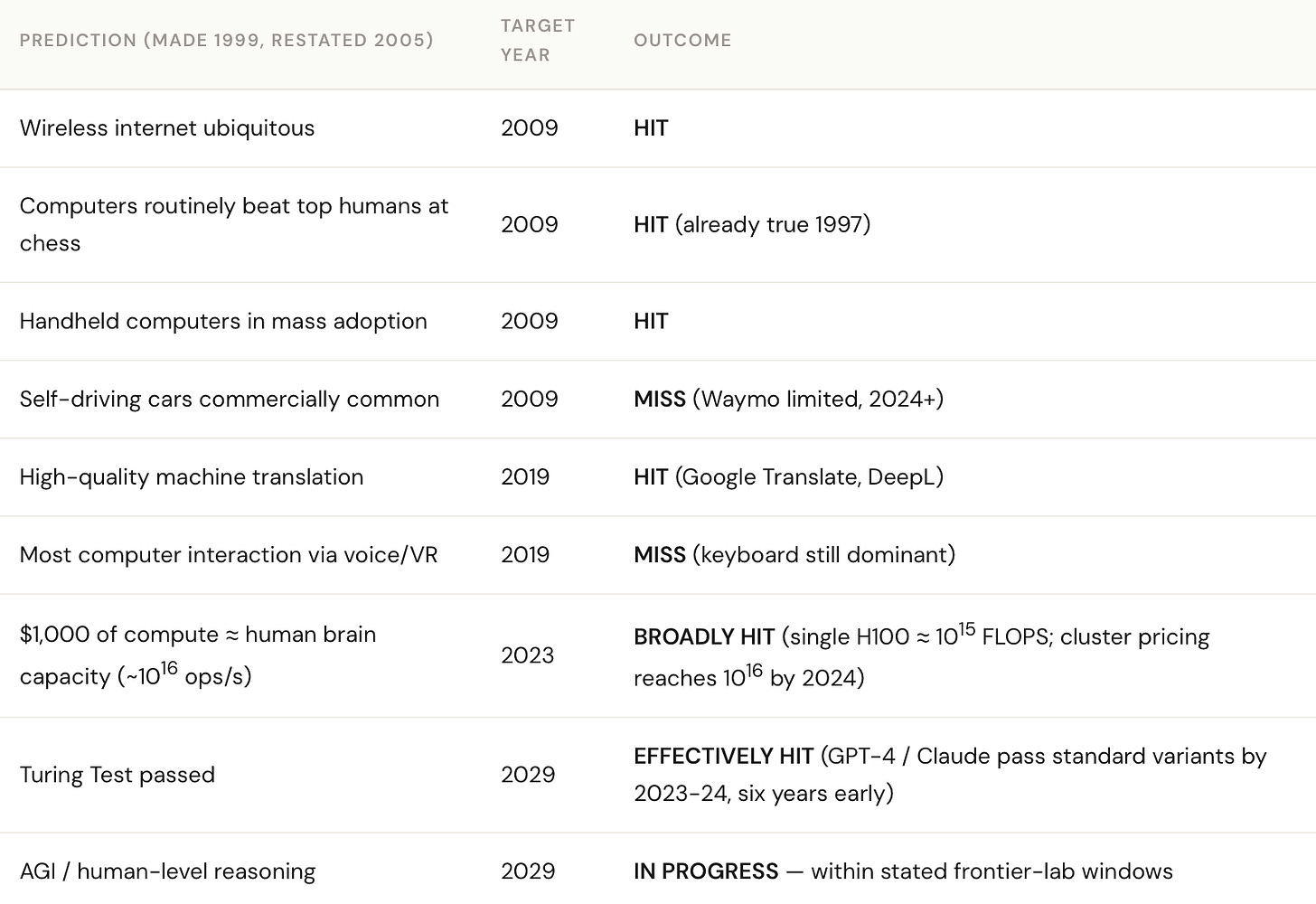

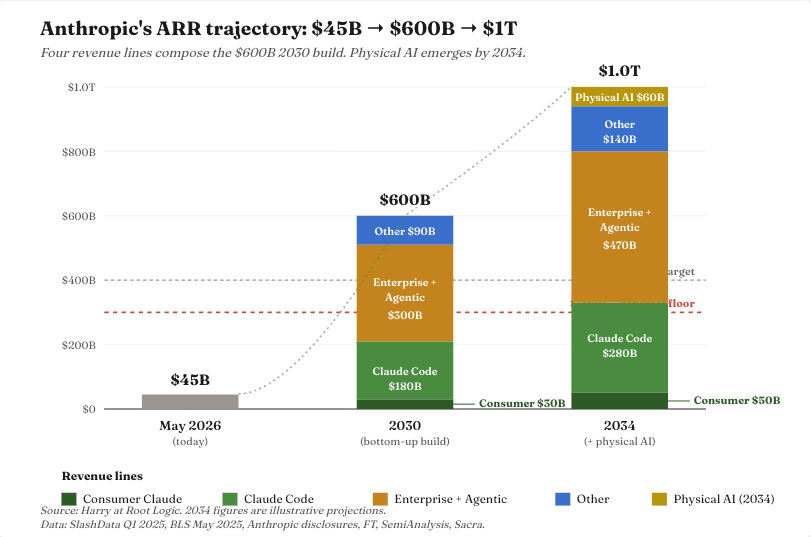

The core reason is that three independent forecasts land within this range from completely different starting points - and Ray Kurzweil, whom I’ve mentioned many times on Substack, pinpoints this exact date and has a decent track record. See below:

Ray Kurzweil in 1999, anchored to a Law of Accelerating Returns argument about compute curves, lands at 2029. Dario Amodei in October 2024 (Machines of Loving Grace), anchored to scaling-law extrapolation from training runs inside Anthropic, lands at 2026-27. Demis Hassabis in 2024, anchored to capability benchmarks at DeepMind, lands at 2029-34.

Three methodologies. Three starting points, decades apart. Convergence on a four-to-seven-year window.

Three independent forecasts converging on the same window isn’t easy for the bear case to dismiss.

And it’s what makes Anthropic’s compute commitments rational rather than reckless. If AGI is the destination, then the binding constraint on every quarter between now and then is compute. You buy as much of it as your balance sheet supports. You amortise it over a horizon that ends in a regime change - not a normal capex cycle. If you can place a bet at 100/1 that you think will almost certainly win, you make the opportunity count.

There’s a second-order point that matters more for the revenue maths. AGI doesn’t just change the capability of frontier models - it changes the unit of revenue.

Today’s per-token API pricing is built on the assumption that a model is a tool a human operates. Once a model can operate as an agent - running 24/7, replacing the marginal hour of a knowledge worker - the natural unit of revenue stops being tokens and starts being labour replacement at a fraction of the human cost.

Per-token billing collapses into per-task billing collapses into per-FTE-equivalent billing. The price per unit of useful work goes down. The number of units of useful work goes up by orders of magnitude.

That’s why I’m explicit about AGI sitting at the centre of the build. If you don’t buy AGI by 2029, in a 24/7 persistent agent world, the velocity in the numbers below doesn’t hold.

The agentic services line, in particular, requires it. Reasonable people disagree on whether AGI actually arrives in that window. I think it does. My 2030 hypothesis assumes it does, and the bears section explicitly engages with what happens if I’m wrong.

What those commitments mathematically require

So Anthropic has signed up for $380 billion of compute spend through to roughly 2031. What annual revenue does that imply?

These ramps will be heavily back-loaded as capacity comes online — real spend in 2026 is much lower than the annual average, real spend in 2030 much higher. But annualised, here’s what Anthropic has signed up for:

Amazon’s $100 billion across ten years. That’s roughly $10 billion of compute opex per year at full deployment.

Google’s $200 billion across five years from 2027. $40 billion per year at peak.

Microsoft’s $30 billion across roughly the same window. $6 billion per year.

Fluidstack’s $50 billion across data centre construction and operation. Probably $10 billion per year at peak.

SpaceX undisclosed but small relative to the others.

Total: somewhere around $60-70 billion a year on compute by 2028, ramping higher into 2029-30 as Google’s commitment hits full velocity.

Now apply basic unit economics. Anthropic’s inference gross margins are currently around 70% (SemiAnalysis, May 2026). If that holds - and the trend is steeply upward, not flat - then for compute to be 30% of revenue, you need:

$60-70 billion compute spend ÷ 30% = $200-230 billion ARR floor in 2028

That’s the bare minimum for the contracts to be marginally economic, and it uses Anthropic’s current unit economics.
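The floor calculation can be reproduced in a few lines. This is a minimal sketch that uses only the estimates quoted above; the dictionary and function names are illustrative, SpaceX is left out because its figure is undisclosed, and the 30% compute share simply mirrors the 70% gross margin assumption.

```python
# Annualised compute commitments at peak, in $ billions per year.
# All figures are this article's estimates; SpaceX is undisclosed and omitted.
annual_compute_bn = {
    "Amazon": 100 / 10,   # $100B across ten years
    "Google": 200 / 5,    # $200B across five years from 2027
    "Microsoft": 30 / 5,  # $30B across roughly the same window
    "Fluidstack": 10,     # "probably $10B per year at peak"
}


def revenue_floor_bn(compute_spend_bn: float, compute_share: float = 0.30) -> float:
    """Revenue at which compute spend equals `compute_share` of revenue."""
    return compute_spend_bn / compute_share


total_compute_bn = sum(annual_compute_bn.values())
print(f"Annualised compute at peak: ${total_compute_bn:.0f}B")

# The article rounds the total to a $60-70B range, so show both ends.
for spend in (60, 70):
    print(f"${spend}B of compute -> ${revenue_floor_bn(spend):.0f}B ARR floor")
```

Changing `compute_share` shows how sensitive the floor is to the margin assumption.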

For context: $45 billion to $200-230 billion in roughly four years. Steep, but Anthropic’s ARR grew 5x from $9 billion to $45 billion in the five months between December and May this year. The growth rate isn’t the question - it’s whether it holds, and whether the compute will come online quickly enough.

If enough compute comes online in time, and margins increase, the $230 billion in 2028 isn’t the interesting number - it’s the $600bn in 2030 and the $1 trillion not long after.

Here’s how it happens.

Building Anthropic’s $1 trillion from the bottom up

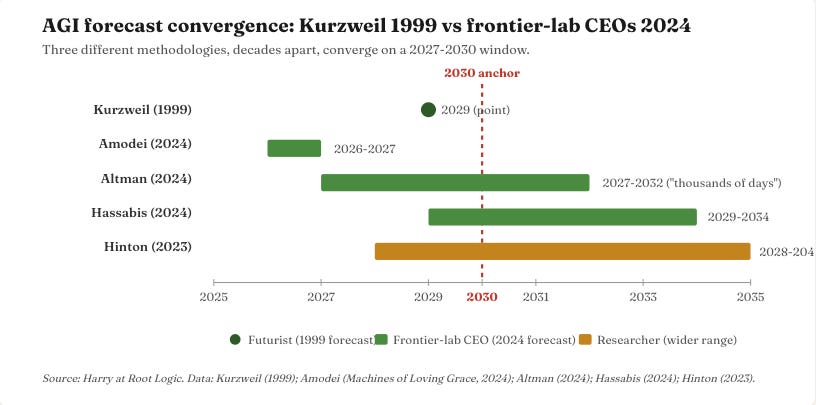

Four lines. $600 billion by 2030 on the existing product surface. Then a path to $1 trillion by 2034, assuming physical AI is barely captured by Anthropic. The build below.

I’ll walk each of the four lines below. The big one - Enterprise + Agentic at $300B - gets the most space. Claude Code, also load-bearing at $180B. Then Consumer and Other.

Consumer Claude — $30 billion

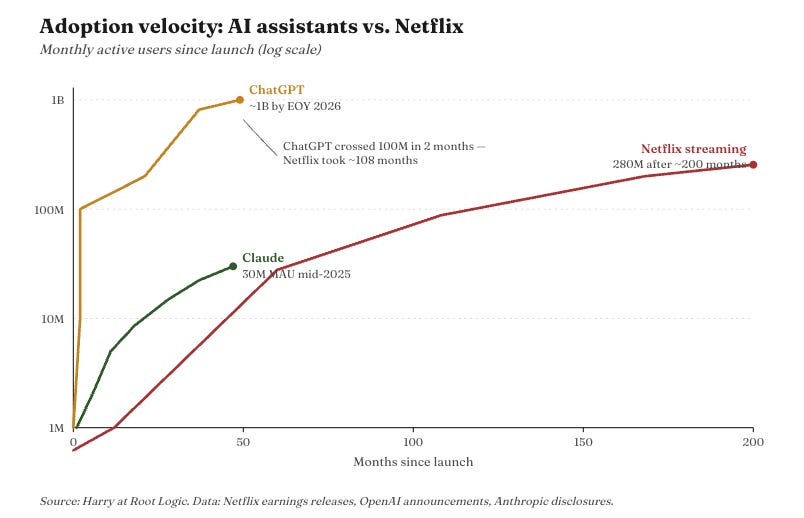

Claude.ai is at roughly 12.5 million monthly app users today (AICPB, February 2026), up 49% month-on-month. The web property does another 288 million visits per month. Real traction. But this is the smallest line in the build, and it’s worth being honest about why.

Anthropic isn’t a consumer company. Their disclosed revenue mix is 80% enterprise. They’ve actively de-prioritised B2C - no advertising at scale, no celebrity endorsements, no integrations with consumer platforms. They’ve built a B2B model and they’re doubling down on it.

Claude is #2 or #3 in consumer mindshare. ChatGPT is the verb. Gemini is bundled into a billion Google accounts. Claude is the “smart person’s chat” - high quality, low brand awareness outside tech. By 2030 that improves, but it doesn’t flip.

Here’s the streaming analogy. Ten years ago, paying for four streaming services felt absurd. Today the average household pays for three or four - Netflix, Disney+, HBO Max, Apple TV+.

AI subscriptions are heading the same way.

By 2030, paying for ChatGPT, Claude, and Gemini Pro at once won’t feel exotic - it’ll feel like normal household tech spend. Each subscription has its own use case, its own model strengths. But Claude is the Apple TV+ of that stack, not the Netflix.

The math: ChatGPT plausibly hits ~200M paid users by 2030 (4x today, on its current trajectory). Claude captures roughly half of that paid base - call it 100 million paid subscribers. At $300 ARPU per year (~$25/month blended), that’s $30 billion.

Could go higher if Anthropic pivots harder to consumer. Could go lower if they double down on enterprise. $30B is the honest base case.
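For anyone who wants to flex the inputs, here is a small sketch of the consumer arithmetic. The function name and the alternative share values are illustrative assumptions; only the 200M paid users, the roughly-half share and the $300 ARPU come from the text above.

```python
def consumer_revenue_bn(chatgpt_paid_users_m: float,
                        claude_share: float,
                        arpu_per_year: float) -> float:
    """Consumer revenue in $ billions from paid users (millions) and ARPU ($)."""
    claude_paid_users_m = chatgpt_paid_users_m * claude_share
    return claude_paid_users_m * arpu_per_year / 1000  # $ millions -> $ billions


base_case_bn = consumer_revenue_bn(200, 0.5, 300)
print(f"Base case: ${base_case_bn:.0f}B")

# Illustrative sensitivities (these shares are assumptions, not forecasts).
for share in (0.25, 0.5, 0.75):
    revenue = consumer_revenue_bn(200, share, 300)
    print(f"Claude at {share:.0%} of ChatGPT's paid base: ${revenue:.0f}B")
```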

This is the line where AGI matters most. If models stay at “good chat assistant” capability, the number is too high. If they reach “knowledge worker substitute”, it’s too low, and right now we cannot even theorise what the use cases will be.

Claude Code — $180 billion

The most empirically anchored line on the list. And, as it turns out, probably the most underweighted.

Claude Code went from public launch in May 2025 to $2.5 billion ARR by February 2026. Anthropic disclosed in April that weekly active users had doubled since 1 January and that business subscriptions had quadrupled in the same window. The average developer using Claude Code now spends 20 hours a week with it, according to Anthropic’s CEO. Roughly 4% of all public GitHub commits are now Claude Code-authored.

Here’s the size of the denominator. The global professional software engineer population is roughly 36.5 million today, projected to reach 45 million by 2030.

Weighted by regional compensation - $140K average in the US, $65K in Western Europe, $35K in China, $15K in India, $25K across the rest of the world - the global engineer compensation pool sits at roughly $1.9T today, projected to ~$3T by 2030.

You see where I’m going with this back-of-the-envelope arithmetic…

By 2030, Claude Code isn’t competing for developer wallet share. It’s substituting for engineering hours directly. If 20% of that work shifts to Claude Code, and Anthropic captures it at the same 30% of human-cost rate I’m using for the agentic services line, you get:

$3.0T × 20% × 30% = $180 billion
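The same line as a tiny function, so the work-share assumption can be varied. This is a sketch, not a model: the function name is made up for illustration, and the 5% and 10% cases are hypothetical sensitivities rather than figures from this article.

```python
def substitution_revenue_bn(comp_pool_tn: float,
                            work_share: float,
                            price_vs_human: float) -> float:
    """Revenue in $ billions if `work_share` of a compensation pool (in $ trillions)
    shifts to AI and is billed at `price_vs_human` of the human cost."""
    return comp_pool_tn * 1000 * work_share * price_vs_human


claude_code_2030_bn = substitution_revenue_bn(3.0, 0.20, 0.30)
print(f"Base case: ${claude_code_2030_bn:.0f}B")

# Hypothetical lower work shares, holding the 30% price ratio constant.
for share in (0.05, 0.10, 0.20):
    revenue = substitution_revenue_bn(3.0, share, 0.30)
    print(f"{share:.0%} of engineering work -> ${revenue:.0f}B")
```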

Claude Code crossing 20% of global engineering work by 2030 sounds aggressive in isolation. It isn’t. Claude Code today is at roughly 0.13% capture against the comp pool. Reaching 20% in four years requires roughly 3.5x annual growth. Anthropic’s disclosed WAU doubling between January and April this year is 3.7x annualised, and business subscription growth in the same window is over 4x annualised. The current trajectory already exceeds what 20% capture requires.
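The required growth multiple is a compounding calculation, sketched below. The first figure follows the comparison above (0.13% capture today to a 20% work share). The second is an alternative like-for-like view that I am adding as an assumption: revenue today ($2.5B) to revenue in the build ($180B). The function name is illustrative.

```python
def required_annual_multiple(start: float, end: float, years: float) -> float:
    """Constant yearly growth multiple that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years)


# $2.5B of ARR against a ~$1.9T compensation pool (1,900 in $ billions).
capture_today = 2.5 / 1900
print(f"Capture today: {capture_today:.2%}")

# Capture today -> 20% of engineering work, over four years.
share_multiple = required_annual_multiple(capture_today, 0.20, 4)
print(f"Share-based multiple: {share_multiple:.1f}x per year")

# Alternative (assumption): ARR today -> the $180B line, over four years.
revenue_multiple = required_annual_multiple(2.5, 180, 4)
print(f"Revenue-based multiple: {revenue_multiple:.1f}x per year")
```

Both versions assume a constant multiple every year, which real growth curves rarely deliver.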

At $180B, this stops being a “developer productivity tool” line. It becomes Anthropic’s most direct claim on engineering labour substitution - and the most defensible line in the build, because the product, the customers, and the trajectory all already exist today.

Enterprise + Agentic — $300 billion

The biggest single line in the build. And the one that captures the broadest shift in how white-collar work gets done.

Two product motions sit inside this number.

Workflow augmentation is the per-seat enterprise license model - marketing teams, lawyers, analysts, finance, ops using Claude as the tool they reach for in the way they currently reach for Microsoft Office. Human in the loop, $20-100/seat/month enterprise pricing. Sure, the pricing model might be different in the end, but let’s work with this for now.

Persistent agentic services is the always-on, human-out-of-the-loop motion - customer service bots running 24/7, procurement agents auto-negotiating supplier contracts, sales SDRs powered by Claude doing outbound, internal IT help desks, contract review pipelines.

Why these are one item in my model, not two: the two products are converging. A “seat” today becomes “a seat with agent capabilities” tomorrow becomes “the agent is the seat” the year after. Anthropic’s enterprise customers spending $1M+ aren’t buying chat licenses - they’re buying agent platforms. The transition is already happening.

The empirical anchor is strong. Eight of the Fortune 10 are now Claude customers. Over 1,000 enterprise customers spend more than $1 million annually - doubled from 500 in under two months (Sacra, April 2026). Customers spending over $100,000 a year have grown 7x in the past 12 months. Anthropic’s revenue mix is already 80% enterprise.

The math:

Global non-engineering knowledge worker comp pool: ~$27 trillion annually (Goldman, BLS, ILO)

AI captures ~10% of that work by 2030, priced at 30% of human cost

Anthropic share of the AI-enterprise vendor market: ~35% (vs OpenAI ~40%, Google/others ~25%)

$27T × 10% × 30% × 35% = $283B, call it $300B

The 10% capture rate by 2030 is the bit I’d want a bear to engage with. Possible? Maybe. Plausible? Definitely.
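Since the capture rate is the contested input, here is a sketch that holds the other three factors at the values above and varies only that one. The function name and the 3% and 5% cases are illustrative assumptions, not forecasts.

```python
def enterprise_revenue_bn(pool_tn: float = 27.0,
                          ai_capture: float = 0.10,
                          price_vs_human: float = 0.30,
                          anthropic_share: float = 0.35) -> float:
    """Anthropic enterprise + agentic revenue in $ billions.

    pool_tn          non-engineering knowledge worker comp pool, $ trillions
    ai_capture       share of that work done by AI
    price_vs_human   AI price as a fraction of human cost
    anthropic_share  Anthropic's share of the AI-enterprise vendor market
    """
    return pool_tn * 1000 * ai_capture * price_vs_human * anthropic_share


base_case_bn = enterprise_revenue_bn()
print(f"Base case: ${base_case_bn:.1f}B")

# Hypothetical lower capture rates for a bear to plug in.
for capture in (0.03, 0.05, 0.10):
    revenue = enterprise_revenue_bn(ai_capture=capture)
    print(f"AI captures {capture:.0%} of the work -> ${revenue:.0f}B")
```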

Other — $90 billion

The catch-all. Sovereign deals (with the Pentagon supply-chain risk caveat from March 2026 sitting here, not its own line). Cloud platform rev share through Bedrock, Vertex AI, and Foundry. OEM licensing (NVIDIA reference designs, Apple Intelligence-type deals). Vertical-specific products embedded in healthcare, legal, financial services under partner brands.

I’m deliberately not sizing each piece. $90B is a round number that says “this stuff is real, but I’m not going to pretend to break it down precisely.” For an article about defensibility, naming the residual rather than hiding it is the honest move.

So that’s $600 billion by 2030. $1 trillion lands in 2034.

The bottom-up build to 2030 gets us to $600 billion. The path to Anthropic’s stated $1T ambition runs another three to four years out - to roughly 2034.

What fills the gap from $600B to $1T? Two things, both straightforward:

The existing lines keep growing. A 2030 to 2034 trajectory at moderate growth rates (Claude Code from $180B to ~$280B as engineering capture moves from 20% to ~28%; Enterprise + Agentic from $300B to ~$470B; Consumer from $30B to ~$50B; Other to ~$140B) gets you most of the way there.

Physical AI emerges as a new revenue line. Robotics. Embodied agents. Multi-modal applications that don’t yet have business models attached to them. None of these are on the 2030 build because none are revenue-generating today. By 2032-33 they will be. By 2034 they’re plausibly a $50-80B line on their own. I’ll come back to physical AI specifically later in this series.

That’s the path from $600B to $1T. Not a stretch - a continuation of the same growth dynamics, plus one new product category that doesn’t yet contribute.
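A quick check of that bridge, using only the line values quoted above. The sketch also derives the annual growth rate each line would need between 2030 and 2034; those derived rates are my arithmetic on the article's endpoints, and the variable names are illustrative.

```python
# Revenue by line in $ billions, as quoted in the 2030 build and the 2034 path.
lines_2030 = {"Consumer": 30, "Claude Code": 180,
              "Enterprise + Agentic": 300, "Other": 90}
lines_2034 = {"Consumer": 50, "Claude Code": 280,
              "Enterprise + Agentic": 470, "Other": 140}
physical_ai_2034 = (50, 80)  # low and high end of the new line

existing_2034 = sum(lines_2034.values())
total_low = existing_2034 + physical_ai_2034[0]
total_high = existing_2034 + physical_ai_2034[1]
print(f"Existing lines in 2034: ${existing_2034}B")
print(f"With physical AI: ${total_low}B to ${total_high}B")

# Implied compound annual growth per line over the four years 2030 -> 2034.
for name, start in lines_2030.items():
    growth = (lines_2034[name] / start) ** (1 / 4) - 1
    print(f"{name}: {growth:.0%} per year")
```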

For now, the bear case maths on the 2030 build: take out any one of the four 2030 lines and the total still comes in at $300-570B, depending which one you cut. The supply-side argument - compute, power, memory - works at any of those numbers. That’s the part of the thesis I care about, and it’s where the rest of this series goes.
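The leave-one-out range is easy to verify. A minimal sketch, with the four line values taken from the build above and variable names chosen for illustration:

```python
# 2030 build in $ billions.
lines_2030 = {"Consumer": 30, "Claude Code": 180,
              "Enterprise + Agentic": 300, "Other": 90}

total_2030 = sum(lines_2030.values())
print(f"Full build: ${total_2030}B")

# Remove one line at a time and see what is left.
without_line = {name: total_2030 - value for name, value in lines_2030.items()}
for name, remaining in without_line.items():
    print(f"Without {name}: ${remaining}B")

print(f"Range: ${min(without_line.values())}B to ${max(without_line.values())}B")
```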

Two of the four lines (Claude Code, Enterprise + Agentic) sit on growth rates Anthropic is already running. One (Consumer) is the smallest and most easily compressed. One (Other) is the deliberately vague residual.

Each line has a clear failure mode. The conjunction produces $600B by 2030 and $1T by 2034. Not everything needs to fully deliver for the broader infrastructure trade to work.

Bears I take seriously

Four bear arguments I considered for this article. I’m including three. The fourth — “OpenAI, Google, Meta, xAI compete for the same enterprise budget and split it” — is actually a bull point dressed as a bear, since the supply-side infrastructure trade works whether one lab captures $1T or five labs split $1.5-2T. I’ll come back to that in the closing section.

The remaining three are bears that genuinely threaten the projection.

One — the distributed inference thesis

I saw @JigarShahDC make a compelling argument on Twitter recently: if frontier training stops mattering and inference moves to the edge - smartphones, laptops, on-device chips - the moat around frontier labs compresses faster than enterprise contract terms allow them to reprice. On-device Llama variants and Apple Intelligence are early evidence. If most inference happens locally by 2028-29, Anthropic’s $200B+ in committed cloud compute starts looking like a stranded asset and the agentic services line halves.

My response. Even in that scenario, total inference compute requirements grow - the compute just shifts location. Smartphone-resident AI is incremental demand, not substitution, because the use cases (always-on personal agents, on-device privacy-preserving inference) are additive to the use cases that need server-side compute (multi-agent orchestration, very long context, frontier capability). And Anthropic’s enterprise mix is overwhelmingly server-side, where the moat is contract length, integration depth, and switching cost - not raw model quality.

For Shah’s argument to win, you need both edge inference to scale much faster than I expect and enterprise switching costs to compress materially. I think one of those happens. Both happening together would put the agentic line at $150B instead of $300B. This is a real risk, not a negligible one.

Two — the contracts are signed, the revenue might not arrive

The $380 billion in compute commitments doesn’t unwind if growth slows. Amazon doesn’t tear up a 10-year contract because Anthropic missed Q3. If the agentic services line underdelivers, Anthropic could end up at $400 billion ARR in 2030 against, say, $40 billion of annual compute opex - still profitable, but not the magnetic-attractor business case that justifies a $900 billion private valuation today.

My response. Revenue would have to fall short by more than 50% from the build above to break the underlying unit economics, given the 10-year amortisation on the Amazon side and the 5-year ramp on Google. Not impossible - particularly if the agentic line comes in at half my build ($150B instead of $300B) - but a heavy lift for the bears.
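One way to sanity-check that shortfall figure is sketched below. It assumes the $60-70 billion annual compute estimate from earlier still applies in 2030 and that compute should stay at or below 30% of revenue; both are simplifying assumptions, and the $100 billion case is purely hypothetical, included to show what a steeper compute ramp does to the answer.

```python
def breakeven_shortfall(compute_bn: float,
                        base_revenue_bn: float = 600,
                        compute_share_limit: float = 0.30) -> float:
    """Fraction by which revenue can miss the base case before compute
    spend exceeds `compute_share_limit` of revenue."""
    minimum_revenue_bn = compute_bn / compute_share_limit
    return 1 - minimum_revenue_bn / base_revenue_bn


# $60B and $70B are the article's range; $100B is a hypothetical higher ramp.
for compute in (60, 70, 100):
    shortfall = breakeven_shortfall(compute)
    print(f"${compute}B compute: revenue can miss by up to {shortfall:.0%}")
```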

If I’m being explicit about probabilities, this is the bear scenario I assign the highest probability to. Maybe one-in-four. Not “Anthropic fails”, but “Anthropic ends up at $500-600B ARR in 2030 and the equity story compresses materially from here”. That’s a bear case I’d want positioned against, not one I’d dismiss.

Three — algorithmic efficiency flattens the curve

Mixture-of-experts, distillation, speculative decoding, edge inference. Every one has delivered 3-10x effective FLOPs gains per generation. If models keep getting more efficient, the implied compute spend (and therefore the implied revenue requirement) drops. Maybe the contracts are simply oversized for the world that actually arrives.

My response. Efficiency gains have historically expanded use cases rather than reducing total spend. Anthropic’s own inference gross margins moved from 38% to 70% over the last year as efficiency improved — and revenue still grew 5x. Cheaper tokens mean more tokens consumed, not fewer dollars spent. The economist’s name for this is the Jevons paradox; the investor’s name for it is “the obvious thing that everyone underestimates because the second-order effects compound”.

The bear here would need efficiency gains to outpace use case expansion for multiple generations in a row. Possible in a single generation. Hard to sustain across three or four.

What this means

If you buy the framework above - AGI by 2029, contracts that mathematically require $300B by 2030, a credible path to $600B on the existing product surface by 2030, and $1T by 2034 once physical AI starts contributing - then the rest of the AI infrastructure trade isn’t speculative. It’s arithmetic.

The aggregate platform layer - Anthropic plus OpenAI plus Google DeepMind plus Meta AI plus xAI plus Chinese frontier labs - is heading for $1.5-2 trillion in annual revenue by 2030. That implies a total AI market in the $5-8 trillion range, depending on how much value capture flows downstream of the model layer.

To deliver that revenue, the physical infrastructure has to scale from roughly 30 GW of global AI-dedicated compute today to somewhere between 130 and 200 GW by 2030. That’s a 4-7x increase in five years.
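To make the buildout pace concrete, this sketch converts the 30 GW starting point and the 130-200 GW range into a multiple and an implied compound annual growth rate over five years. The function name is illustrative, and the constant-growth assumption is mine; real capacity will arrive in lumps.

```python
def buildout(current_gw: float, target_gw: float, years: float) -> tuple[float, float]:
    """Return (total multiple, implied compound annual growth rate)."""
    multiple = target_gw / current_gw
    annual_growth = multiple ** (1 / years) - 1
    return multiple, annual_growth


low_multiple, low_growth = buildout(30, 130, 5)
high_multiple, high_growth = buildout(30, 200, 5)

print(f"Low case:  {low_multiple:.1f}x, {low_growth:.0%} per year")
print(f"High case: {high_multiple:.1f}x, {high_growth:.0%} per year")
```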

And the binding constraint on getting there isn’t capital. Capital is already committed and flowing. The binding constraint is the physical buildout. Power. Compute. Memory.

That’s where this series goes next. Three articles, three binding constraints, three sets of companies that own them.

Coming Thursday: what an $8 trillion AI market means for the rest of the economy. Subscribe to get it as soon as it drops.

The information provided in this article is for informational and educational purposes only and does not constitute financial, investment, or professional advice. Harry at Root Logic is not a licensed financial advisor, and the content is based on publicly available data, personal analysis, and opinions.